Data centers have been receiving pushback from communities such as Joliet, IL, and Lowell, MA. More than $64 billion in data center projects have been delayed or canceled over concerns about energy and water use, along with negative externalities such as noise, fuel storage, and light.

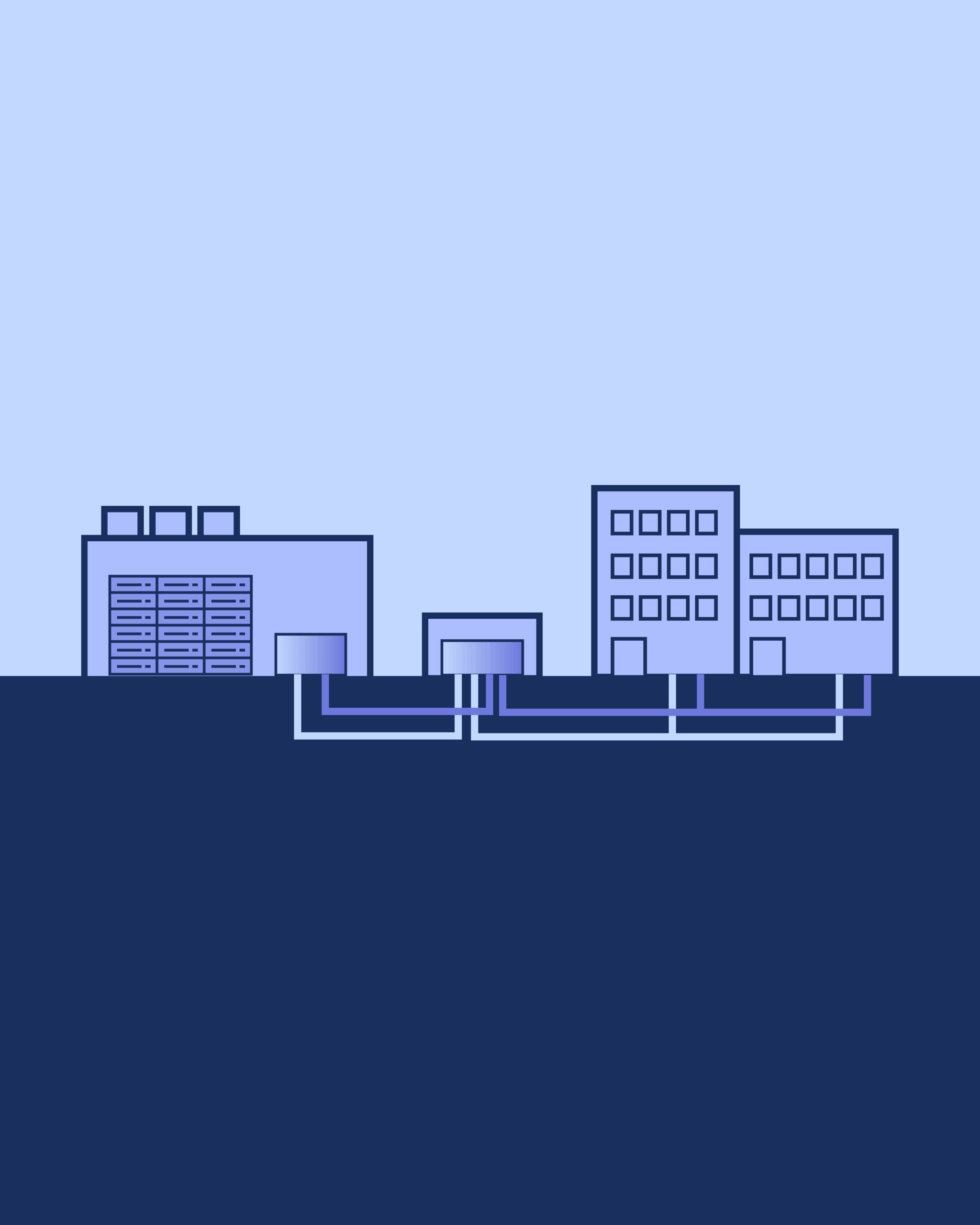

However, there is precedent for mid-size data centers being beneficial neighbors. If a community accepts a data center, it should be under the condition that the data center’s waste heat be available for district heating and/or industrial processes, such as heating schools, swimming pools, and apartments.

Data centers are not going anywhere. They are the fastest-growing building category in the world. Cushman & Wakefield data show US data center construction surged from $8.5 billion in 2019 to over $31 billion in 2024 (while general office construction dropped from $71 billion to $50 billion). So it is worth thinking about how they fit into our cities. This post explores the issues and how European cities are using data center heat.

Nearly all the electricity that enters a data center leaves as heat.

A data center is, thermodynamically, a space heater that does math. Every watt of electricity, through every chip, fan, and backup battery, ultimately becomes thermal energy. A 100 MW data center is a 100 MW thermal emitter, of which at least 50% could be captured as reusable heat. Air-cooled exhaust runs 25°C to 35°C (77°F to 95°F). Water-cooled systems deliver 50°C to 60°C (122°F to 140°F). Two-phase immersion cooling, where servers are submerged in dielectric fluid that boils and recondenses, reaches up to 90°C (194°F). Typical exhaust lands around 38°C (100°F).
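To put rough numbers on the 100 MW example, here is a back-of-the-envelope sketch in Python. It assumes the facility draws its full load around the clock and uses the 50% capture floor quoted above. The function names and the constant-load assumption are illustrative, not figures from any operator.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def recoverable_heat_mwh(load_mw: float, capture_fraction: float = 0.5,
                         hours: float = HOURS_PER_YEAR) -> float:
    """Annual reusable heat in MWh.

    Assumes a constant electrical load all year (a simplifying assumption)
    and that capture_fraction of it is recoverable as useful heat.
    """
    if not 0.0 <= capture_fraction <= 1.0:
        raise ValueError("capture_fraction must be between 0 and 1")
    return load_mw * capture_fraction * hours


def c_to_f(celsius: float) -> float:
    """Convert degrees Celsius to degrees Fahrenheit."""
    return celsius * 9 / 5 + 32


print(recoverable_heat_mwh(100))  # 438000.0 MWh per year at 50% capture
print(c_to_f(90))                 # 194.0
```

Under those assumptions, a 100 MW facility would offer on the order of 438,000 MWh of reusable heat per year. Real loads fluctuate, so treat this as an upper-end estimate for the 50% case.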

How do these temperatures work as an input to another process? Older district heating networks require very hot water. However, modern, energy-efficient networks are designed for 50°C to 70°C (122°F to 158°F). Data center waste heat at 30°C to 60°C (86°F to 140°F) is ideal for district heating, greenhouse agriculture, aquaculture, and swimming pools.
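One way to read these temperature ranges is as a simple matching exercise: compare the top of each source's quoted range against the low end of a modern network's supply range. The sketch below does only that comparison. The dictionary, the function name, and the idea of reporting a "lift" figure are illustrative assumptions; real networks are sized on much more than temperature.

```python
NETWORK_MIN_C = 50  # low end of the modern network range quoted in this post

# Upper end of each source's temperature range in °C, from the figures in this post.
SOURCE_MAX_C = {
    "air-cooled exhaust": 35,
    "water-cooled loop": 60,
    "two-phase immersion": 90,
}


def lift_needed_c(source_max_c: float, network_min_c: float = NETWORK_MIN_C) -> float:
    """Degrees C the heat must be raised to reach the network minimum (0 if already hot enough)."""
    return max(0, network_min_c - source_max_c)


for name, temp_c in SOURCE_MAX_C.items():
    lift = lift_needed_c(temp_c)
    status = "direct feed plausible" if lift == 0 else f"needs about {lift} °C of lift"
    print(f"{name}: {status}")
```

On these numbers, water-cooled and immersion systems can reach a modern network's supply range directly, while air-cooled exhaust would need its temperature raised first, typically with a heat pump, or be routed to lower-temperature uses such as greenhouses and pools.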

Cities are already heating homes with server exhaust.

This is infrastructure operating at scale, heating real homes in real cities. At the large end, data centers are connected to city-scale pipe networks.

In Espoo and Kirkkonummi, Finland, 250,000 people get their heat from servers. Microsoft and Fortum built a 350 MW thermal system that will supply roughly 40 percent of all district heat for the area, reducing CO2 emissions by 400,000 tonnes per year. Seventy-five percent of the waste heat gets used annually.

Stockholm takes a multi-source approach. Stockholm Exergi’s Open District Heating network connects over 30 data centers to 3,000 km of pipes, warming 30,000 apartments. The utility pays operators roughly 2 million SEK per year per megawatt delivered (about $190,000). No single data center is critical. If one shuts down, others absorb the load. That redundancy makes the system investable in a way that a single-source network is not (Stockholm Exergi; EU Covenant of Mayors).
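To make the payment figure concrete, the sketch below scales the quoted rate to two hypothetical delivery levels. The 5 MW and 32 MW inputs are illustrative, and treating payment as strictly linear in delivered megawatts is an assumption; actual contract terms are not described in this post.

```python
SEK_PER_MW_YEAR = 2_000_000  # roughly, per the figure quoted above
USD_PER_MW_YEAR = 190_000    # the post's approximate dollar equivalent


def annual_heat_payment(delivered_mw: float) -> tuple[float, float]:
    """Return (SEK, USD) paid per year for a given delivered heat capacity in MW.

    Assumes payment scales linearly with delivered megawatts (an assumption).
    """
    if delivered_mw < 0:
        raise ValueError("delivered_mw must be non-negative")
    return delivered_mw * SEK_PER_MW_YEAR, delivered_mw * USD_PER_MW_YEAR


for mw in (5, 32):  # hypothetical delivery levels
    sek, usd = annual_heat_payment(mw)
    print(f"{mw} MW delivered: {sek:,.0f} SEK (about ${usd:,.0f}) per year")
```

At the quoted rate, 5 MW of delivered heat would be worth about 10 million SEK a year and 32 MW about 64 million SEK, which gives a sense of why operators treat the heat as a product and not just a disposal problem.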

In Hamina, Finland, Google built a data center on the site of a former paper mill, cooled by Baltic seawater. It now provides 80 percent of local district heating, free of charge. For a small Finnish town, the data center is the heating system (Google Blog).

Here are some data centers acting as good neighbors in their communities, at a range of scales:

| Project | Location | Scale | Heat reach |
| --- | --- | --- | --- |
| Microsoft/Fortum | Espoo & Kirkkonummi, Finland | 350 MW thermal | 250,000 clients |
| Stockholm Exergi | Stockholm, Sweden | 30+ DCs, individual sites 5–32 MW | 30,000 apartments |
| Google Hamina | Hamina, Finland | 140 MW | Town-scale |
| AWS Tallaght | Dublin, Ireland | 3 MW waste heat supplied | University-scale |
| AECOM/London potential | London, UK | ~1,000–1,100 MW total London DC estate | 500,000 homes (potential) |

Why is this not happening in the US? From the US data center operator’s perspective, interconnection takes longer and waste heat revenue may not offset the premium. The operator captures cheap power and externalizes the costs of wasted heat, community disruption, and transmission buildout. There is also typically no existing district heating infrastructure. Stockholm has 3,000 km of pipes, whereas US cities have nothing comparable. We heat buildings individually with gas furnaces, heat pumps, or electric resistance.

That being said, if a mid-size data center really wants to come to your city, you can use zoning to stipulate the conditions under which that land use arrives. Tangible benefits could include free heating for schools, as Google provides in Hamina and AWS provides in Tallaght. But you have to design the data center into the community from the start.

What a city should actually do.

Solve the chicken-and-egg problem. Data centers should not vent recoverable heat to the atmosphere while their neighbors burn gas for warmth. However, the absence of district heating is a cold-start problem. Requiring full waste heat connection before operations begin adds years and cost to project timelines. Instead, a condition could be connection points to future district heating networks, which would also give the host community time to set up receiving infrastructure (which might also be part of a community benefits agreement). The connection comes when the network reaches them, not as a precondition for switching on the servers, similar to requiring sewer connections where a treatment plant is planned but not yet built.

Anchor the heat network with public buildings. The Tallaght model is the clearest demonstration of how to solve the cold-start problem. AWS provides heat free of charge to 47,000 square meters of public buildings, 135 apartments, and 3,000 square meters of commercial space in South Dublin. Savings: 1,500 tonnes CO2 per year, a 60 percent reduction. The trick was sequencing. Public buildings provided the guaranteed demand that made the district heating loop viable from day one. Libraries, community centers, social housing, schools: each one a baseload customer that does not negotiate seasonal discounts or threaten to switch suppliers. Identify a cluster of municipal buildings within 1 mile of a proposed or existing data center. Commit them as anchor customers (About Amazon; SEAI).

Establish the governance structure before the data center arrives. In Tallaght, South Dublin County Council created Heatworks, Ireland’s first not-for-profit energy utility. The community owns the pipes. The data center is a heat supplier, not a monopolist. If the supplier changes, the pipes remain. What happens if the data center closes? The same thing that happens when a power plant retires from a district heating network: backup sources maintain continuity through the contract. Gas boilers or large heat pumps carry the load until a new supplier connects. The logic mirrors municipal water utilities: public distribution, private supply through regulated contracts (CNBC). The Heatworks model deserves replication because it separates ownership of the network from dependence on any single heat source. That separation is what makes long-term investment rational.

Set performance standards for other concerns. Approval conditions also need to address what data centers actually do to their immediate neighbors. Performance standards should cover air quality limits on diesel generator testing and run hours, noise limits at the property line, full-cutoff lighting requirements to prevent light trespass, written utility capacity verification confirming the load can be absorbed without degrading service to existing customers, and water consumption documentation disclosing annual usage and source. The scale of the data center also matters. The negative externalities of a gigawatt data center are much harder to control within a community than those of mid-size data centers in the range of 2 to 50 MW.